Many clients include quality checks in surveys to make sure respondents are engaged and answering honestly. However, many of these checks produce false positives, which means valid, engaged respondents are thrown out of the sample. How can we reduce false positives?

First and foremost, don’t use a one-strike-and-you’re-out rule. Respondents need to be given the benefit of the doubt and should not be removed because they fail just one quality check. A respondent can easily be momentarily distracted and fail a quality check. This doesn’t make them a poor respondent; it makes them human! The key is to look for a pattern of questionable behavior across multiple failures.
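As a rough illustration of the multiple-failure idea, here is a minimal Python sketch. The respondent IDs, check names, and the cutoff of two failures are all assumptions made up for the example, not values from any particular study.

```python
# Hypothetical per-respondent results: True means the check was failed.
FAILED_CHECKS = {
    "r1": {"low_incidence": False, "red_herring": False, "open_end": False},
    "r2": {"low_incidence": True, "red_herring": False, "open_end": False},
    "r3": {"low_incidence": True, "red_herring": True, "open_end": True},
}

# Assumed cutoff: remove only respondents who fail at least this many checks.
MIN_FAILURES_TO_REMOVE = 2


def count_failures(checks):
    """Return how many quality checks this respondent failed."""
    return sum(1 for failed in checks.values() if failed)


def respondents_to_remove(results, min_failures=MIN_FAILURES_TO_REMOVE):
    """Return sorted IDs of respondents with at least `min_failures` failures."""
    return sorted(
        rid
        for rid, checks in results.items()
        if count_failures(checks) >= min_failures
    )


print(respondents_to_remove(FAILED_CHECKS))
```

In this toy sample, the respondent with a single failure stays in the sample; only the one with a pattern of failures is flagged. The right cutoff will depend on how many checks a survey contains.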

Even on individual checks there is a need to avoid the one-strike-and-you’re-out rule. One popular check is to eliminate people who claim to participate in low-incidence activities or purchase low-incidence categories. There is a good chance some valid respondents will choose one such activity or category, so it is better to set the failure point at two or more activities/categories. Also, the list of activities and categories needs to be carefully devised so the items aren’t related to one another. When activities/categories are related, it becomes more likely that a respondent who selects two or more of them is giving valid answers. For example, someone who attended an opera in the past year may be more likely to have also attended the ballet in the past year.

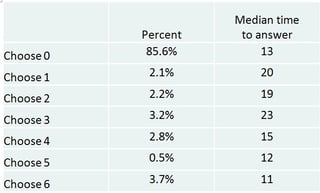
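The two-or-more rule for low-incidence items could be sketched along these lines. The item list here is hypothetical and was picked only so that the items are unrelated to one another; a real list would need to be built and vetted for each study.

```python
# Hypothetical low-incidence items, chosen to be unrelated to one another.
LOW_INCIDENCE_ITEMS = {
    "attended an opera",
    "went skydiving",
    "bought a boat",
    "competed in a triathlon",
}

# Fail the check only when two or more low-incidence items are selected.
MIN_ITEMS_TO_FAIL = 2


def fails_low_incidence_check(selected, low_incidence=LOW_INCIDENCE_ITEMS,
                              min_items=MIN_ITEMS_TO_FAIL):
    """Return True if the respondent selected `min_items` or more low-incidence items."""
    hits = set(selected) & low_incidence
    return len(hits) >= min_items


# One low-incidence item is plausible, so this respondent passes.
print(fails_low_incidence_check(["attended an opera", "went to a movie"]))
# Two unrelated low-incidence items trips the check.
print(fails_low_incidence_check(["went skydiving", "bought a boat"]))
```

Note that the sketch does not put opera and ballet on the same list, since related items would make a two-item selection more believable.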

Red herrings are another popular check. This is where fake items are included in the answer list. In some instances, all answer choices might be red herrings. It is difficult to devise fake items that aren’t confused with real items. For example, a fake brand may be very similar to a real brand or could even be a valid regional brand. In some recent research, respondents were asked to pick which of six fake items they had purchased in the past six months. We found that respondents choosing 1-3 items were spending more time answering the question than those choosing 4+ items, which suggests they were considering the list rather than clicking through it. Based on the time to answer, the recommendation was to set the failure criterion at 4+ items.
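One way to look for a sensible red-herring cutoff is to compare time spent on the question by the number of fake items selected. The sketch below assumes each response is recorded as a pair of (fake items selected, seconds on the question); the numbers are invented for illustration and are not the data from the research described above.

```python
from collections import defaultdict
from statistics import median

# Hypothetical records: (number of fake items selected, seconds on the question).
RESPONSES = [
    (1, 14.0), (1, 12.0), (2, 13.0), (3, 11.0), (3, 12.5),
    (4, 4.0), (4, 5.0), (5, 3.5), (6, 3.0),
]


def median_time_by_count(responses):
    """Return the median seconds spent, keyed by number of fake items selected."""
    times = defaultdict(list)
    for n_selected, seconds in responses:
        times[n_selected].append(seconds)
    return {n: median(values) for n, values in sorted(times.items())}


def fails_red_herring_check(n_selected, threshold=4):
    """Return True if the respondent selected `threshold` or more fake items."""
    return n_selected >= threshold


for n, seconds in median_time_by_count(RESPONSES).items():
    print(n, seconds, fails_red_herring_check(n))
```

If the median time drops sharply at some count, as it does at four items in this made-up sample, that count is a candidate for the failure point. The default threshold of 4 mirrors the recommendation above, but it should be checked against each study’s own timing data.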

Clients also often use open-ended responses as a quality check. In this check, nonsense answers, such as a random set of letters, are identified. This check can be tricky though. Some respondents simply don’t like open-ended questions but give perfectly valid answers on all other questions. Also, the number of failures can vary widely depending on the type of open-ended question and the location of the question in the survey. Often, once respondents who fail other checks are eliminated, the number of nonsense answers on open-ended questions drops to negligible levels. For example, in recent research we went from 6.8% nonsense answers to 1.7% after removing those who failed other quality checks.
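The before-and-after comparison described above can be computed with a few lines of Python. This sketch assumes each respondent has already been coded for nonsense open-ended answers and for failing other checks; the ten records are hypothetical and will not reproduce the 6.8% and 1.7% figures.

```python
def nonsense_rate(respondents):
    """Return the percentage of respondents coded as giving a nonsense open-end."""
    if not respondents:
        return 0.0
    flagged = sum(1 for r in respondents if r["nonsense_open_end"])
    return 100.0 * flagged / len(respondents)


# Hypothetical sample of ten respondents with pre-coded flags.
NONSENSE_IDS = {"r2", "r3", "r5"}
FAILED_OTHER_IDS = {"r2", "r3"}
SAMPLE = [
    {
        "id": f"r{i}",
        "nonsense_open_end": f"r{i}" in NONSENSE_IDS,
        "failed_other_checks": f"r{i}" in FAILED_OTHER_IDS,
    }
    for i in range(1, 11)
]

# Drop respondents who failed the other quality checks, then recompute.
remaining = [r for r in SAMPLE if not r["failed_other_checks"]]

print(nonsense_rate(SAMPLE))
print(nonsense_rate(remaining))
```

If the rate among the remaining respondents is already small, the open-ended check may add little beyond what the other checks catch.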

Bottom line: carefully design quality checks and require multiple failure points to avoid removing valid respondents as false positives.
